Reliable 3+km HD FPV solution

This post presents my first experiences using the wifibroadcast video transmission.

—

To be honest, this post is a bit contradictory.

The title uses the word “reliable” and later in this post I’ll describe my first three FPV flights where the last one ended in a really bad crash.

Analyzing the cause showed that it was actually my fault, not the transmission’s.

But before I come to the bad part let’s start with the good news which is a range test.

Extended range test

The last range test was a bit limited in terms of range since the area I was in was limited to 500m line of sight (LOS).

This time I had a car available and drove to a small hill where I placed the video sender.

You can see a picture of the valley down the hill here:

I used the ALFA AWUS36NHA with the omni antenna that came with it as a transmitter.

The H264 data rate was 7 Mbps with a double retransmission.

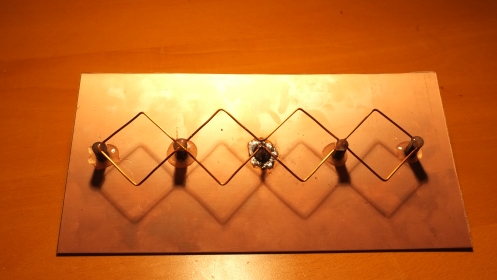

On the rx side I used the TP-LINK TL-WN722N with my home-made double biquad antenna:

The results were really astonishing.

I drove down the valley all the way to the end right before I lost line of sight.

At this point (at 3km distance to the transmitter) I had rock-solid video image.

Since the double biquad antenna is a directional antenna, its sensitivity decreases when the transmitter is not in the center of the antenna’s receive cone.

And again I was surprised: I could move the antenna ±30° both horizontally and vertically before I lost too many packets.

This is really good news: as long as your quad stays within a radius of 3km, you are safe to fly within a 60° sector.

This also indicates that the maximum distance is way beyond 3km.

My guess would be that at least 5km should be possible.
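As a rough sanity check on that guess, here is a minimal sketch that compares the free-space path loss at 3km and 5km. It assumes ideal free-space propagation (a real valley only approximates this) and a carrier at 2.437GHz, since the post does not state the channel that was used.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # m/s
FREQ_HZ = 2.437e9  # assumption: 2.4GHz band, channel 6 (not stated in the post)


def fspl_db(distance_m: float, freq_hz: float) -> float:
    """Free-space path loss in dB between two isotropic antennas."""
    return 20 * math.log10(4 * math.pi * distance_m * freq_hz / SPEED_OF_LIGHT)


loss_3km = fspl_db(3000, FREQ_HZ)
loss_5km = fspl_db(5000, FREQ_HZ)
extra_db = loss_5km - loss_3km  # additional loss when going from 3km to 5km

print(f"path loss at 3km: {loss_3km:.1f} dB")
print(f"path loss at 5km: {loss_5km:.1f} dB")
print(f"extra loss:       {extra_db:.1f} dB")
```

Going from 3km to 5km costs about 4.4 dB of extra path loss in free space. Whether the link has that much margin left at 3km was not measured; the ±30° antenna offset that still worked only hints at it.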

I also tested a dipole antenna at the receiver.

This gave a maximum distance of 900m with the advantage of a 360° coverage.

The end of my last FPV flight

As I have mentioned before my last flight ended quite brutally.

And I have learned a lot from it.

Two main problems have caused that crash: Strong wind (30km/h) and blocked line of sight.

I was flying at a distance of around 200m against the wind.

When I turned around the quad was moving with the wind and suddenly lost height which blocked the LOS between the antennas.

The stream quickly became so disrupted that I could not guess the quad’s current position and height.

While I was trying to move the quad back to my position (I did not know that the LOS was blocked due to the height) the strong wind pushed the quad away horizontally.

When I (blindly) increased altitude the quad was already pushed away so far that the LOS was now blocked horizontally due to trees close to me.

I still received data but that was mostly unusable.

Maybe every 5 seconds a good frame flashed up briefly and was then disrupted again.

When I lost contact completely I was forced to “land”.

The problem now was: Where is it? I knew that the battery was almost dead, so the RC-activated beeper would soon go quiet.

I ran around like a mad dog listening for the beeping.

After one hour I gave up and “accepted my loss”.

Back home I converted the received video stream of the crash flight to single images using gstreamer.

This way I was able to recover a more or less intact image 10s before the crash:

This image allowed me to localize the approximate position, and I finally found my quad sitting in a field (luckily the green antenna stood out of the long grass).

The impact took place at a distance of 700m to the rx station.

This means that the wind pushed the quad in 30s around 500m away from me…

Aftermath and conclusions

Although the crash was quite hard all the electronics and motors stayed intact.

Most likely this is a result of the sandwich construction that protects the electronics between two plates.

However, all of the 8mm carbon fiber rods that hold the motors broke quite brutally.

Under normal circumstances these rods are really durable.

So the crash seemed to be quite hard.

My conclusion is that a blocked line of sight using 2.4GHz transmission is deadly.

In the future I’ll take care that my rx antenna has a clear field of view of at least 180°.

Also my first reaction in case of a lost video connection will be to gain altitude.

This incident also motivates me to buy a GPS receiver that transmits the position of the quad and also provides an automatic “return to home” function.

PS: A little cheer up

So that this post does not end on a bad note, here is the video of my first FPV-only flight.

I shot it using the ALFA AWUS36NHA in the air with a dipole antenna and a TP-LINK TL-WN722N also with a dipole on the ground. The video is decoded and displayed using a raspberry pi as shown here.

The occasional errors in the video are because I had the receiver in my pocket, so my body quite often blocked the line of sight between the two antennas. The images are wobbly because it was quite windy that day (around 20km/h). I know, compared to other FPV videos this is sooo boring. But hey, it was my first flight 😉

Very cool! You should post on diydrones, I think there’d be a lot of interest. With the way you’ve created the link the mavlink telemetry could easily be sent on a different channel/port. They’ve already got a tutorial on how to connect a pixhawk to a pi (http://dev.ardupilot.com/wiki/companion-computers/raspberry-pi-via-mavlink/). Mission Planner on windows can use a stream from a video source but that may induce an excessive delay.

sorry, meant to ask about the jello in the video… any plans on how to reduce/remove it?

This occurs only if there is strong wind. This video has been shot with the same setup without much wind: https://www.youtube.com/watch?v=HeFe1mPJEmg Currently my camera is fixed to the frame. I think I’ll add some dampers if everything else is working properly.

Thanks for doing this. I made a small video using stock antennas to test out the flight-worthiness under glitchy conditions. The furthest distance I go in this video is around 480ft. This is where the transmission becomes barely flyable around the 2:40 mark in the video. I’m using 2.4GHz for now until my 5GHz hardware comes in. I’ll be testing this with a cloverleaf for TX (Alfa AWUS051NH 500mW) and 3-turn Helical for RX (TP-LINK Archer T2UH AC600). I’m using the TP-LINK TL-WN722N for RX in the video:

Do you think there is anything I can do to improve the quality at distance. I know I can increase the number of re-transmissions but this comes at the price of introducing latency. Here are the commands I’m using:

TX from Pi: raspivid -ih -t 0 -w 1280 -h 720 -fps 40 -b 3500000 -n -g 5 -o - | /usr/local/bin/tx -p 0 -r 4 -b 4 -f 1470 wlan0

RX on laptop: ./rx -p 0 -b 4 wlan0 | tee stream.h264 | gst-launch-0.10 -v -e filesrc location=/dev/fd/0 ! h264parse ! ffdec_h264 ! xvimagesink sync=false

Sorry but the video is marked as “private” so I cannot watch it. If you change that I’ll share some of my ideas how you could improve your range.

Hmm not sure how that happened. Should be ok now.

Ok, now the video works.

Your commands look good. A retransmission block size (-b) of 8 might improve things a little in case of surrounding networks. This however increases the latency a bit. But your scenario looks almost wifi-free.

The other thing I noticed is that the line of sight might be obstructed by trees. At close distance this is less of a problem but at your distance that might explain the effects you are seeing. I learned the line of sight fact the hard way (-> crash ;).

What could you do to make things better?

– Use better antennas. I think the standard TL-WN722N antenna is a half-wave dipole giving 2.5dBi. If you change both RX and TX antenna to full-wave dipoles at 5dBi this should already improve things a lot. My favourite is still the double biquad antenna on the RX side (giving 11dBi gain). But at 5GHz that is not really an option.

– Use ALFA AWUS36NHA at the TX side with the appropriate kernel patches. Unfortunately I haven’t yet compared that card with the TP-Link. In a separate scenario I tested two TP-Link cards with 2.5dBi dipoles. That maxed out at 300m with a clear line of sight.

– Fly in an area with fewer obstructions
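To put the antenna suggestion into numbers, here is a small sketch that converts extra antenna gain into a range factor. It assumes free-space propagation, where range grows with the square root of the linear gain, and it takes the dBi figures from the list above at face value. Real-world gains will be lower once obstructions come into play.

```python
STOCK_DIPOLE_DBI = 2.5   # standard TL-WN722N antenna, as estimated above
BETTER_DIPOLE_DBI = 5.0  # the suggested replacement dipole
DOUBLE_BIQUAD_DBI = 11.0  # home-made double biquad on the RX side


def range_factor(extra_gain_db: float) -> float:
    """Factor by which free-space range grows for a given gain in dB."""
    return 10 ** (extra_gain_db / 20)


# Swapping the antenna on BOTH ends adds the gain difference twice.
both_dipoles_db = 2 * (BETTER_DIPOLE_DBI - STOCK_DIPOLE_DBI)
# Swapping only the RX antenna for the double biquad.
biquad_rx_db = DOUBLE_BIQUAD_DBI - STOCK_DIPOLE_DBI

print(f"5dBi dipoles on both ends: +{both_dipoles_db:.1f} dB, "
      f"range x{range_factor(both_dipoles_db):.2f}")
print(f"double biquad on RX only:  +{biquad_rx_db:.1f} dB, "
      f"range x{range_factor(biquad_rx_db):.2f}")
```

Under these assumptions the 300m measured with two 2.5dBi dipoles would grow to a bit over 500m with 5dBi dipoles on both ends. That is an estimate, not a measurement.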

But I guess you want to race with your quad? Doing that without obstacles might be a bit boring 😀

If you are starting to use 5GHz devices I would really appreciate if you report your results here. That might be of interest to many other people.

Thanks for your reply. I’ll post the 5GHz results as soon as I have them. Another issue that comes to mind is that I would like to use at least 2 antennas on the receiving side for diversity. However, I cannot find a USB Wifi card that does this AND supports monitor mode. Do you know of such a card? To work around this I was thinking if there is some way of getting the code you provided to take inputs from multiple cards, combine the useful signals together before pushing the data through stdout to gstreamer. I’m messing with the code a bit to achieve this but wondered if you can shed some light on it.

Ok, my 5GHz tests aren’t going so well mainly because the adapters I’ve tried are crap at monitor mode. Adapters that support monitor mode with external antennas on 5GHz for Linux are very limited. The only thing that shows small signs of working are Alfa AWUS051NH -> Alfa AWUS051NH (on channel 48 only, with lots of packetloss) or Alfa AWUS051NH -> TP-LINK TL-WDN3200 which works better on more than 1 channel and has just about manageable packet loss but no external antenna. I’ve tried an antenna mod with the TP-LINK TL-WDN3200 as detailed at: youtube.com/watch?v=6nViSfck468 with no success (can’t get the signal wire soldered).

Here’s an idea that I have come up with to possibly alleviate the limitation of Linux supported adapters for what we’re doing. On the TX Pi have 2 adapters. An Alfa AWUS051NH for 5GHz tx and another cheap and lightweight 5GHz adapter in AP/Ad-hoc mode which the Alfa will connect to and send packets to over UDP. On the RX side we have the same setup except we can use any Linux supported 5GHz wifi card (with external antenna ofcourse such as the Alfa AC1200 – doesn’t support monitor mode) and connect that to a cheap 5GHz adapter on the RX side. We make the AP/Ad-hoc adapters on both sides share the same mac address and figure out some way to make them not cross-connect at startup. The RX side should now capture packets sent from the TX but not suffer disassociation if it goes out of range.

Please let me know what you think of this.

Heh, the dual network thing is a creative idea. But there might be some hidden issues that make things complicated. The cross-association you mentioned is one thing. But collision of ACK frames could also be problematic. I think it /could/ work but there are many open “if” questions.

Concerning the ALFA cards: Did you debug the cause of the problems? The two combinations you tested suggest that the receiving ALFA has some issues. Maybe you could dig a bit deeper into the debugging using the following steps:

– Test the injection rate of the TX card: “cat /dev/zero | sudo ./tx” This gives some info on the TX capabilities. Does changing the rate (via iwconfig) affect the numbers of ./tx?

– On a second card look at the received packets via wireshark. There you can see at which rate the TX card sends its data. Also, if you set the tx retransmission count to 1 you can possibly find the cause for dropped frames. The first 4 bytes of each wifibroadcast-packet contain a sequence number that increments. Finding this in the binary wireshark blob helps you to find drops. Maybe at the same point where the drop occurred another wifi card was sending? Or maybe something suspicious is there in the timing and length of the drops? Looking at such measurements sometimes opens your eyes 🙂
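The sequence-number check described above can be sketched as follows. This assumes you have already extracted the wifibroadcast payloads from the capture as byte strings (the extraction itself is not shown), and that the counter is a little-endian unsigned 32-bit integer. The byte order is an assumption, so flip it if the numbers look wrong. The helper name is made up for illustration.

```python
import struct


def find_missing_sequence_numbers(payloads, byteorder="<"):
    """Return the sequence numbers that never showed up in `payloads`.

    Each payload is expected to start with a 4-byte sequence number.
    Duplicates (from retransmissions) are harmless. Counter wrap-around
    is not handled in this sketch.
    """
    seen = set()
    for payload in payloads:
        if len(payload) < 4:
            continue  # too short to carry a sequence number
        (seq,) = struct.unpack_from(byteorder + "I", payload, 0)
        seen.add(seq)
    if not seen:
        return []
    return [n for n in range(min(seen), max(seen) + 1) if n not in seen]


# Synthetic example: packets 0, 1, 1 (retransmitted), 2 and 5 arrive.
example = [struct.pack("<I", n) + b"video" for n in (0, 1, 1, 2, 5)]
print(find_missing_sequence_numbers(example))  # [3, 4]
```

Correlating the reported gaps with the capture timestamps should then show whether drops coincide with traffic from other wifi cards.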

About the modding of the antenna, just buy an MS156 pigtail and you don’t need to solder anything 🙂 http://www.aliexpress.com/item/MS156-TO-RP-SMA-Female-jump-cable-RG178-15CM-for-LTE-modem-Yota-LU150-1PC/641087483.html

Anyway, having several wireless cards close together on similar frequencies (sometimes they don’t even need to be similar) is not a good idea. I work as a network engineer, and even miniPCI cards, which are designed for this, can interfere with each other…

befinitiv

This looks great and I see some raspberry pis in my near future to try this. The one thing that I would really want, to be able to replace my existing fpv setups, is the ability to get the main camera through my fpv system. It seems no one has yet made the converter that would be needed–I saw a failed kickstarter. Do you have any ideas as to how you might make a gopro or Sony Nex work with this in addition to the raspberry pi cam?

Marc

Very nice! 🙂

What about using miniPCI/miniPCI-E cards instead of the USB?

TP-Link is very cheap HW, so the quality is derived of it…

About the cards, I can recommend e.g. Dbii cards, they are really high quality, ubnt cards are also pretty fine. Also you can make 2×2 MIMO, which can even make longer range 🙂

I can’t recommend to use wifi with Broadcom chips…

How would you connect this to a raspberry pi?

Hi,

I got interested in quadcopters just now, and I would like to have one which can take a payload of 500g (half a kg, 1.1 pounds). I would use it to spray water onto my trees.

Which forum would you recommend to start with?

I don’t want to end up with a crappy quadcopter that I need to replace in the end.

Hope, it is not too naggy/spammy comment.

Best,

Laszlo

Hi Laszlo

I agree, it is quite difficult to choose the right platform. I also had problems with this. You might try diydrones.com but I’m not 100% sure if that is the best address for you. Sorry 😉 My selection was driven by price. I looked for the cheapest flight control and found the Naze32.

Hi befinitiv,

First of all, you are great. Thank you kindly for all your effort on such a useful project.

I am not sure if this is still the case, but it would be sad if the HD stream were actually VGA upscaled to HD:

The sensor modes that are used by Pi are

1080P30 cropped 1-30fps mode

5MPix 1-15fps mode

5MPix 0.1666-1fps mode

2×2 binned 1296×972 1-42fps

2×2 binned 1296×730 1-49fps

VGA 30-60fps

VGA 60-90fps

Whilst the Omnivision datasheet may claim 720P60, they never provided us with register settings for it, or we ignored it as it had a hugely cropped field of view. If you request 1280×720 at 60fps on a Pi, you will get VGA off the sensor and it will then be upscaled to 1280×720.

More discussion from when the extra modes were released on the forum, eg https://www.raspberrypi.org/forums/viewtopic.php?f=43&t=62364&start=250#p520078, https://www.raspberrypi.org/forums/viewtopic.php?f=43&t=72116 and https://www.raspberrypi.org/forums/viewtopic.php?f=43&t=85714&p=605259

Hi Befi. Could you post a step-by-step tutorial on how to patch the drivers for the ALFA? There are several tutorials around but some do not seem to work… I get only about 200m out of my setup (TX=ALFA, RX=722N). Many thanks in advance. Cheers! Thomas

If you have time, could you describe how you made your antenna and connected it for the higher gain? I’m researching on how to make a system to transmit about a kilometer and a half. Both the transmit and receive location are fixed. And line of sight. And with that antenna you made I should have the range to be able to do it.

Thanks again! Great work! Sorry about the drone.

There are a lot of instructions on the web on how to make a double biquad antenna for wifi.

If you need even more range, you must choose an antenna with a narrower beam, e.g. a Yagi (16-18 dB) or a parabolic grid antenna (20+ dB), but those would require an automatic antenna tracker for precise aiming.

If both locations are fixed, things are easier, because you can easily use directional antenna on both ends (e.g. biquads) without tracking.

Just out of curiosity, did you use only 20 dBm of transmit power for 3 km range or was your test site located in Bolivia? If the former is true, it opens really interesting possibilities with narrower-beam antenna (grid) and/or higher power levels (where legal). Not that 3km wouldn’t be impressive & enough for most casual use.

Those are Bolivian ranges.

After spending 120€ to 4 * AWUS036NHA cards, it’s really bugging me why they cannot output more power than pathetic 22 dBm even with crda/driver patched, despite the card having SE2576L power amplifier chip (max 29 dBm). There is some evidence[1] that even TL-WN722N can output the same power without external amplifier chip.

This makes me wonder if the SE2576L amplifier is actually used at all. I’m not sure if it’s a bidirectional amplifier, i.e. one that can boost both incoming and outgoing signals. Maybe it’s used only for input boosting, giving the NHA its notorious ability to work reliably on low-RSSI connections?

If you look at the SE2576L datasheet[2], the chip has Power Amplifier Enable pin. I’m not sure if it disables the whole chip or just the amplifier part (passing the signal through without extra amplification?).

If some AR9271 cards have an external amplifier while some don’t, where’s the logic driving the amplifier? Apparently not in the ath9k driver, because it’s a generic driver for all AR9271 cards. Is the logic in the wiring itself, requiring no software support? The SE2576L is a very simple chip, more like an analog chip, and needs no protocol of any kind. The AR9271 chip is known to be very hackable[3], i.e. almost all the functionality is implemented in the (open source) driver/firmware.

This all makes me think that maybe the amplifier functionality of SE2576L is not enabled at all. AR9271 has several GPIO pins which could be used to drive the EN pin of SE2576L. If you inspect the NHA pcb[4], the EN pin leads to via[5], so cannot directly see where it’s connected on AR9271 side, but GPIO14 seems most prominent, also leading to via[6]. Quick test with multimeter confirms this, although my test probe was too thick to pick that particular pin reliably.

The AR9271 datasheet[7] tells what register you have to poke to enable high output on the GPIO14 pin. I found no evidence of ath9k doing this (no surprise, as it’s a generic driver). I guess it should be relatively simple to test my theory by modifying the ath9k firmware[8] to set GPIO14 high, but I don’t have time right now to set up the build environment on my rpi. Just wanted to share my theory. I’m not an EE or anything and it’s possible (if not likely) that I’m wrong.

Btw, why would Alfa wire up a power amplifier chip if it’s not used? Maybe it was enabled in their driver until wifi regulation got too strict, or maybe the chip is there just to back their “1000 mW” claim (although SE2576L is more like 800 mW). Or maybe it’s used only for input signal amplification.
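For readers who want to compare the dBm and mW figures quoted in this comment, here is a small conversion sketch. The formulas are the standard definitions. The list of values simply repeats the numbers mentioned above.

```python
import math


def dbm_to_mw(dbm: float) -> float:
    """Convert a power level in dBm to milliwatts."""
    return 10 ** (dbm / 10)


def mw_to_dbm(mw: float) -> float:
    """Convert a power in milliwatts to dBm."""
    return 10 * math.log10(mw)


for label, dbm in [
    ("20 dBm (range-test question)", 20),
    ("22 dBm (measured NHA output)", 22),
    ("29 dBm (SE2576L maximum)", 29),
]:
    print(f"{label}: {dbm_to_mw(dbm):.0f} mW")

print(f"advertised 1000 mW: {mw_to_dbm(1000):.0f} dBm")
```

This matches the remark that 29 dBm is closer to 800 mW than to the advertised 1000 mW, and it shows that the 22 dBm actually observed is only around 160 mW.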

[1] http://yo3iiu.ro/blog/?p=1301

[2] http://www.skyworksinc.com/uploads/documents/202428A.pdf (SE2576L datasheet)

[3] https://docs.google.com/presentation/d/1CofNlbHs2bLdJuW3VACzAImqesGQoktUTXS6zKgDDKI/edit?pli=1 (2014 Defcon Presentation – Atheros HAL)

[4] http://jaakko.kapsi.fi/AWUS036NHA/AWUS036NHA_overview.jpg

[5] http://jaakko.kapsi.fi/AWUS036NHA/SE2576L_closeup_on_AWUS036NHA.jpg

[6] http://jaakko.kapsi.fi/AWUS036NHA/AR9271_GPIO14_closeup_on_AWUS036NHA.jpg

[7] http://www.cqham.ru/forum/attachment.php?attachmentid=155133&d=1383397504 (AR9271 datasheet)

[8] https://github.com/qca/open-ath9k-htc-firmware

PS. Sorry for low quality close-up photos of the NHA board. I used iPhone with very cheap magnifier lens. By your own eyes (with the help of magnifier) it’s easy to follow the traces, though. The photos are only for general interests.

Another idea on a totally unrelated topic: OpenWRT contains a patch for ath9k which enables 5/10 MHz bandwidths. I’m not sure if the AR9271 hardware supports those but I guess it does, since it’s only a matter of setting some clock frequencies. A 5 MHz bandwidth can give a stronger signal by several dB (vs. 20 MHz) because the RF energy is more concentrated. The tradeoff is lower throughput, but it is still enough for our application (transmitting an H264 bitstream). This would be a really interesting hack to test out.
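The size of that gain can be estimated with a short calculation. This is a minimal sketch assuming the total transmit power stays the same when the channel is narrowed, so that the power per MHz rises by the ratio of the bandwidths. Whether the AR9271 actually behaves this way is untested here.

```python
import math


def psd_gain_db(wide_mhz: float, narrow_mhz: float) -> float:
    """Gain in power spectral density when the same total power is
    squeezed from `wide_mhz` into `narrow_mhz` of bandwidth."""
    return 10 * math.log10(wide_mhz / narrow_mhz)


gain_10mhz = psd_gain_db(20, 10)
gain_5mhz = psd_gain_db(20, 5)

print(f"20 MHz -> 10 MHz: +{gain_10mhz:.1f} dB")
print(f"20 MHz ->  5 MHz: +{gain_5mhz:.1f} dB")
```

So 5 MHz channels would buy roughly 6 dB over 20 MHz under this assumption, which in free space corresponds to about twice the range.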

Definitely the AR9271 is very potential chip for skilled enough programmer (which I’m not), because of the open firmware and very few hardware restrictions[3].

[1] https://dev.openwrt.org/browser/trunk/package/kernel/mac80211/patches/512-ath9k_channelbw_debugfs.patch

[2] http://research.microsoft.com/pubs/73426/ChannelWidth.pdf

[3] https://docs.google.com/presentation/d/1CofNlbHs2bLdJuW3VACzAImqesGQoktUTXS6zKgDDKI/edit?pli=1

Is there a way to pipe output video data from OpenCV through instead of the Raspivid? I have attempted to and I no longer get the issue where fifo0 has no data, but I am not receiving anything on the receiver pi.

Any help would be appreciated. Thanks!

Hi Matt

Could you share some (rough) code so that I can better understand what you are trying to achieve?

Sure thing. I can get the video to display on the transmitting pi device, but when I try to transmit it I am having issues (probably because I haven’t piped anything before).

Here are the relevant chunks of code that call the camera feed, setup the image frame to be displayed, and then where I try to send it to stdout to pipe it to tx.

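#imports needed by the chunks below (VideoStream is assumed to come from the imutils package)

import sys

import cv2

from imutils.video import VideoStream

#where I am pulling the video from the pi camera

vs = VideoStream(usePiCamera=True).start()

#where I am setting the "image_for_result" variable

frame = vs.read()

image_for_result = frame.copy()

#where I am displaying the video feed after applying ROI to objects

cv2.imshow("Output", image_for_result)

#where I am writing the raw pixel bytes of the frame to stdout

sys.stdout.buffer.write(image_for_result.tobytes())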

In the transmission function (tx.sh), I am just calling “sudo python detect.py | ”

where detect.py is my OpenCV script.

Your code would just write the raw image contents to stdout. Raw meaning the raw pixel values.

With this there are actually several problems:

1) The data size of raw images is too high to transfer them in real-time over wifi. So you might be able to transfer very small images in real time or very low frame rates

2) Your code just writes out a vector of bytes. But you need much more than that to interpret the data as an image. You need to know the width and height, the color format (bayer, rgb, yuv, …) and the bit width. None of this is transferred in your snippet. To do so, you would need to serialize the image so that you generate a vector of bytes that includes all needed fields. On top of that you would also need to packetize this stream, so that the receiver can determine the beginning of an image.
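Both points can be illustrated with a short sketch. The header layout, the `FRM0` marker and the function names below are made up for illustration and are not part of wifibroadcast. The frame is passed in as plain bytes, which is what `frame.tobytes()` would give you, with width and height taken from `frame.shape`.

```python
import struct

MAGIC = b"FRM0"  # hypothetical start-of-frame marker
# Hypothetical header: magic, width, height, channels, bits per sample, payload size
HEADER = struct.Struct("<4sHHBBI")


def raw_bitrate_mbps(width, height, channels, bits_per_sample, fps):
    """Data rate of an uncompressed video stream in Mbit/s."""
    return width * height * channels * bits_per_sample * fps / 1e6


def pack_frame(width, height, channels, bits_per_sample, pixels: bytes) -> bytes:
    """Prefix raw pixel data with the fields a receiver needs to decode it."""
    expected = width * height * channels * bits_per_sample // 8
    if len(pixels) != expected:
        raise ValueError(f"expected {expected} pixel bytes, got {len(pixels)}")
    header = HEADER.pack(MAGIC, width, height, channels, bits_per_sample, len(pixels))
    return header + pixels


def unpack_frame(buffer: bytes):
    """Inverse of pack_frame: returns (width, height, channels, bits, pixels)."""
    magic, width, height, channels, bits, size = HEADER.unpack_from(buffer, 0)
    if magic != MAGIC:
        raise ValueError("buffer does not start at a frame boundary")
    pixels = buffer[HEADER.size:HEADER.size + size]
    if len(pixels) != size:
        raise ValueError("truncated frame")
    return width, height, channels, bits, pixels


# Point 1: uncompressed 720p at 30fps, 3 channels of 8 bits each.
print(f"{raw_bitrate_mbps(1280, 720, 3, 8, 30):.0f} Mbit/s")

# Point 2: round trip of a tiny 4x2 test image.
tiny = bytes(range(4 * 2 * 3))
assert unpack_frame(pack_frame(4, 2, 3, 8, tiny)) == (4, 2, 3, 8, tiny)
```

Raw 720p at 30fps comes out at several hundred Mbit/s, compared with the few Mbit/s of the h264 streams used in this post. On a lossy broadcast link the receiver would additionally have to search for the marker to resynchronize after dropped packets, which this sketch leaves out.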

All the things above are automatically done if you are using the h264 data. So if you do not need to access the pixels on the TX, you should use h264 directly.

sorry that last line was supposed to say I am calling “sudo python detect.py | normal tx function here”

The comment format didn’t like it when I tried using the greater-than and less-than symbols.

I believe OpenCV has the ability to convert and output the video as h264, though I will need to double check. So as long as I can ensure that it can, and can have it output the video to stdout, I should be good?

To give some perspective, I am involved in a project that is supposed to make an autonomous drone that is capable of detection and tracking with a “stealth tether”, which just means it cant send out any signals to the ground vehicle we are following (like lidar, sonar, etc.).

I was hoping to utilize this system to enable video transfer of the tracking ROI box from a large distance that hosting an access point on the system couldn’t achieve.

Thanks for the tips! I will try to get it working and post back if I have good results.